Content Marketing

Amping Your Blog’s Visuals, Step By Step

Good Morning!

A recent report found that page views were 94 percent higher for pages with visual content than for pages that contained only text.

But images can be unexpectedly tough to nail. You want each visual to be relevant, but not cheesy; effective, rather than decorative.

Here’s an amazing list of image search tools that will get you going – just make sure the images you choose feel authentic. Also, be sure to check out cool photography blogs and Tumblrs, which don’t usually pop up in search engines.

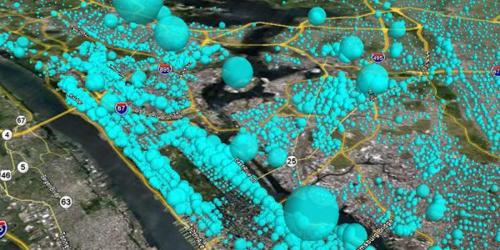

If you’ve trawled the internet and still can’t find the right graphic, you might want to think about creating your own. Grab a camera, or maybe throw together an infographic – with helpful tools, of course.

If it feels applicable to your brand, get your readers involved with a user-generated content contest. These guys will help you set one up super easily.

K, Go Forth!
