Best Practices for Generating Content Briefs for AI

Content marketing projects thrive or languish based on the creative brief. When we prime our creators with a compelling idea, a clear purpose, and a defined audience, they are far more likely to develop an outstanding piece of content than when we hand them a fuzzy, poorly articulated concept. No doubt, the better the brief, the better the output.

Yet we’ve all written creative briefs that seemed clear to us, only to receive a deliverable that wasn’t what we wanted. A quick conversation with your colleagues is a great way to limit these misunderstandings.

But what if your colleague is a machine?

AI Fluency Is Becoming a Key Content Skill

Technology has laid the foundation for modern marketing, and generative AI is reinforcing the walls. Marketing teams already generate text, create images, and produce video at a rapid pace, and generative AI is making that process more efficient. Innovative companies are seeing the impact, and Forrester predicts that 10% of Fortune 500 enterprises will adopt AI to generate content in 2023.

That means we, the human colleagues, must learn to communicate well with our machine colleagues. As writers, we need to learn how to “speak” AI with better creative briefs. Once we master that skill, the odds increase that our requests will produce assets that meet the needs of the business.

Let’s look at a few best practices for writing creative briefs for generative AI creators.

Best Practices for Writing Creative Briefs for Generative AI

1. Get specific.

Details matter when working with generative AI. I’ve used a language generation tool called Jasper.ai to demonstrate how the right request made in the right way improves your odds of getting text you can use in your blog post. Specifics matter just as much (if not more) for visual assets.

To illustrate, let’s see what happens with different kinds of prompts on DALL-E (now DALL-E 2), a generative AI for images. The nonprofit OpenAI and its GPT-3 large language model provide the foundation for DALL-E, and as of November 2022, anyone can access it. Users get 50 “credits” when they sign up. Each credit enables a user to input a visual prompt and receive four AI-generated images.

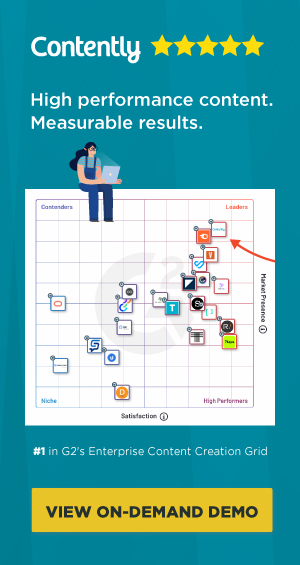

Prompts can be general, describing in simple terms what you want the image to show. For example, a prompt that says “dad with his child” gave me the following four options:

Because my request wasn’t super-specific, each image came in a different style (photography vs. digital art), palette (warm tones vs. cool tones), context (outdoor spaces vs. indeterminate), and angle (straight on, side views, or rear views), as well as the ethnic backgrounds of the people.

As content marketers, we often know what we want or need, at least as it pertains to our brand’s editorial guidelines and visual style—and we can include those details in our prompts for better results. In fact, an AI art website called DALL-Ery has published a free “prompt book” that teaches creatives how to write better prompts for DALL-E. That’s handy!
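
One way to make those details hard to forget is to treat the prompt as a small structured brief. The Python sketch below is only an illustration: the `ImageBrief` class and its field names (`style`, `palette`, `setting`, `angle`) are hypothetical, borrowed from the dimensions that varied in the results above. It only assembles a prompt string and does not call DALL-E or any other service.

```python
from dataclasses import dataclass


@dataclass
class ImageBrief:
    """Hypothetical container for the details an image prompt can pin down."""
    subject: str
    style: str = ""    # e.g. photography, digital art
    palette: str = ""  # e.g. warm tones, cool tones
    setting: str = ""  # e.g. outdoors, on the beach
    angle: str = ""    # e.g. straight on, side view

    def to_prompt(self) -> str:
        # Lead with the style when one is given, then append the other details.
        head = f"{self.style} of {self.subject}" if self.style else self.subject
        details = [d for d in (self.setting, self.palette, self.angle) if d]
        return ", ".join([head] + details)


vague = ImageBrief(subject="a dad with his child")
specific = ImageBrief(
    subject="a dad with his child",
    style="digital art",
    palette="warm tones",
    setting="outdoors",
    angle="side view",
)

print(vague.to_prompt())     # a dad with his child
print(specific.to_prompt())  # digital art of a dad with his child, outdoors, warm tones, side view
```

Any field left empty is a decision the tool will make for you, which is a useful reminder of what the prompt has not yet specified.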

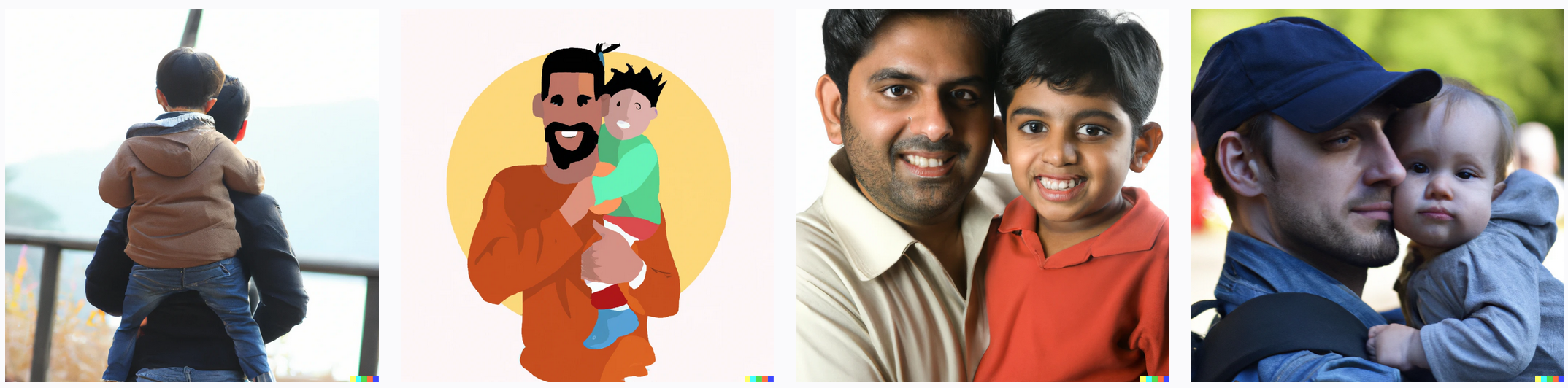

So what happens when I include more specifics in my prompt to read: “photorealistic painting of a dad with his child in silhouette watching the sunset on the beach”? The four options were more specific and recognizably similar:

Worth noting, however, are the problems DALL-E has with generating photographic human faces. The dad’s nose in the far-right image of the first set is clearly constructed from multiple images, as are his baby’s cheeks. Image generation tools also seem to struggle with hands: the child’s hands are visually “off” in all four images of the second set.

That brings me to my second best practice.

2. Use the right tool for the job.

DALL-E is only one of several generative AI tools for visual assets, just as Jasper.ai is only one of several for text. And, at the risk of stating the obvious, different tools have different specialties.

For example, Midjourney is another generative AI for visual outputs. But unlike DALL-E, which is democratic about aesthetics, Midjourney specializes in “pretty” images. Because it runs through a Discord server, its user experience is also more challenging to navigate than DALL-E’s.

Beyond the issue of aesthetics lies the question of what kind of output you need. In the world of visuals, business content regularly incorporates visual representations of workflows, concepts, or frameworks. When I tried to generate a workflow image with the specific prompt “workflow graphic of the content creation process including the following steps: ideation, delegation, creation, production, promotion, and measurement,” I got… this:

Maybe with more practice, I could write a more effective creative brief for my generative AI graphic designer, but it’s also possible the tools aren’t as thoroughly trained (today) on business visuals as on artistic or stock images.

3. Fine-tune your fact-checking.

Now for some bad news: AI content generators can produce images and text with mind-boggling speed, but these models only mimic the text and images they see in their training data. They’re unable to separate facts or reality from misinformation, at least for now. Consequently, AI-generated text and images can look and sound authentic and authoritative yet be riddled with nonsense. One example I’ve seen is a statement that sounded like, and was attributed to, a well-known person but that the model had made up.

So, be careful: Don’t publish AI-generated content without fact-checking it first.
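
A script can’t verify facts, but it can point a human reviewer at the sentences most likely to need checking. The sketch below is a rough heuristic, not a fact-checker: the `flag_for_review` function and its patterns are assumptions made for illustration. It simply flags sentences that contain numbers, quotation marks, or attribution phrases.

```python
import re

# Hypothetical patterns for claims that usually deserve a manual check.
CHECK_PATTERNS = {
    "number or date": re.compile(r"\d"),
    "direct quote": re.compile(r'["“”]'),
    "attribution": re.compile(
        r"\b(said|says|according to|predicts|reports)\b", re.IGNORECASE
    ),
}


def flag_for_review(text):
    """Return (sentence, reasons) pairs for sentences a human should verify."""
    # Naive sentence split on ., !, or ? followed by whitespace.
    sentences = re.split(r"(?<=[.!?])\s+", text.strip())
    flagged = []
    for sentence in sentences:
        reasons = [
            label for label, pattern in CHECK_PATTERNS.items()
            if pattern.search(sentence)
        ]
        if reasons:
            flagged.append((sentence, reasons))
    return flagged


draft = (
    "Generative AI can speed up content production. "
    "Forrester predicts that 10% of Fortune 500 enterprises will adopt AI "
    "to generate content in 2023. "
    "Good briefs still matter."
)

for sentence, reasons in flag_for_review(draft):
    print(f"CHECK ({', '.join(reasons)}): {sentence}")
```

A sentence that passes this filter is not necessarily true. The list is only a starting point for the human review the draft still needs.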

4. Be wary of copyright.

The legalities around generative AI are also fuzzy. In the case of images, OpenAI specifies in its user disclosures that the images it creates are not necessarily unique. The same prompt from two different users may result in a similar or nearly identical image. And though the platform gives creators commercial rights over the images they generate, buyer beware. Questions remain as to whether the data sets OpenAI used to train its model included copyrighted content. If so, complications will arise down the road about the legal status of images and text produced with the help of generative AI.

The rights issues are not trivial. But they also won’t be 100% clear for some time, and the commercial market is marching forward anyway. As for their quality, generative language and image AI tools will continue to improve as people use them. So it’s time for you to start exploring them and learning how to “speak” AI so you’re ready when the machines become part of your team.

Sort through this non-exhaustive list of generative AI applications, choose some to play with, and let us know how it goes!

Generative AI for Language

Jasper.ai: Based on the GPT-3 large language model developed by OpenAI, Jasper offers a subscription-based text generation AI service. Using clear prompts and designating rules related to the type of content and the tone of voice, Jasper can generate full paragraphs of fluent text to use as the basis of blog posts or emails.

Copy.ai: Also based on OpenAI’s GPT-3, Copy.ai similarly generates text for longer content assets like blog posts and emails. The output quality depends on how well you write specific and clear prompts. The platform (like others) also lets you define characteristics of the text, tone, or voice to get closer to the kind of writing you want.

ChatGPT: Launched to the public in November 2022, this tool is not only based on GPT-3 but also developed by the OpenAI team itself to produce conversational text. The fluency is very impressive, as is the ability to dictate “style” by naming a specific writer. Yet beware of “hallucinations,” statements delivered as authoritative facts that are, in fact, nonsense.

Copysmith.ai: This AI tool is specific to e-commerce companies and the paid advertising campaigns they create to drive conversions. It also integrates with common content creation and analytics platforms, so it fits into the typical content team workflow.

Persado: Unlike these other language AI solutions, which anyone can use, the Persado platform caters to large enterprises and has a proprietary language model with built-in capabilities to generate message variants, run multivariate experiments to identify the highest-performing version of a message, and personalize messages to high-value segments.

Generative AI for Visuals

Craiyon: Formerly known as DALL-E Mini, Craiyon is a basic web-based image generation application based on Google AI technology. Craiyon operates with an ad-and-user-donation model. The basic interface looks and feels like an open-source chat room from the early 1990s, and the functionality is more basic than what other platforms offer.

DALL-E: This image generation software has a straightforward web-based user interface and a helpful image gallery that serves as a clear model for how to write effective prompts. The outputs can look like stock photos, digital art, or paintings.

Midjourney: An “artistic” image generation tool with a more chaotic, server-based, and community-driven user experience. Creators work in the same “room” with other creators to prompt their images and watch them render.

New generative AI tools are coming onto the market all the time. Experimenting with them can give you the knowledge and experience to perfect your AI creative briefs. You’ll also develop an informed opinion about when to use them and when human creativity is the better option.

To stay informed on all things content, subscribe to The Content Strategist for more insight on the latest news in digital transformation, content marketing strategy, and rising tech trends.

Image by Busbus