
Facebook Can’t Escape Bias, No Matter What It Says

I took my first journalism class freshman year of high school, back in 2004. Before students could write for the school paper, which came out in print once a month and didn’t exist online, they had to learn about the principles of reporting, then pass a test on the information. I passed on the first try, but more than a decade later, I’ve forgotten most of what we had to study.

The day we learned about bias, however, has stuck with me.

Using clips from The New York Times and The Wall Street Journal, the teacher illustrated how a seemingly innocuous word could reveal a value judgment or political slant. His point was that bias exists everywhere. Even in hard news, there’s no such thing as absolute objectivity.

Two weeks ago, Facebook reminded me of that lesson when it updated its Trending feature and fired the third-party contractors who made up the Trending editorial team.

The update and layoffs came a few months after the social network faced heavy criticism for allegedly omitting politically conservative news from its Trending section. In its press announcement, however, Facebook noted that it “looked into these claims and found no evidence of systematic bias.”

Now, an algorithm dictates news curation instead. As Quartz reported, “The Trending team will now be staffed entirely by engineers, who will work to check that topics and articles surfaced by the algorithms are newsworthy.”

The decision brings up a crucial problem for Facebook: Traditional news—which the company has courted with features like Instant Articles and Facebook Live—doesn’t fit as cleanly into its product as expected.

There’s a confusing, contradictory shading to the company line here. Facebook wants to distance itself from the bad press by handing over more responsibility to its algorithm. Shifting blame (or credit) to an algorithm makes sense as a safe business move, but that’s not really what the company did. It just replaced editorial professionals, who are trained to decide what’s newsworthy, with engineers, who aren’t.

During an August Q&A, Facebook co-founder and CEO Mark Zuckerberg said, “We’re a tech company. We’re not a media company.” But Facebook dominates the internet precisely because it acts like both a tech company and a media company. Facebook put on its media company hat a few months ago when it paid publishers and influencers more than $50 million to create content on Facebook Live.

News exists in a gray area on the platform. The spread of content is a big reason why Facebook was able to bring in $5.2 billion from brands and publishers advertising in Q1. In the past few years, Facebook has arguably become more of a content-sharing network than a social network. Sharing among individuals on Facebook is down, yet according to a May 2016 Pew Research poll, 44 percent of U.S. adults get their news from Facebook.

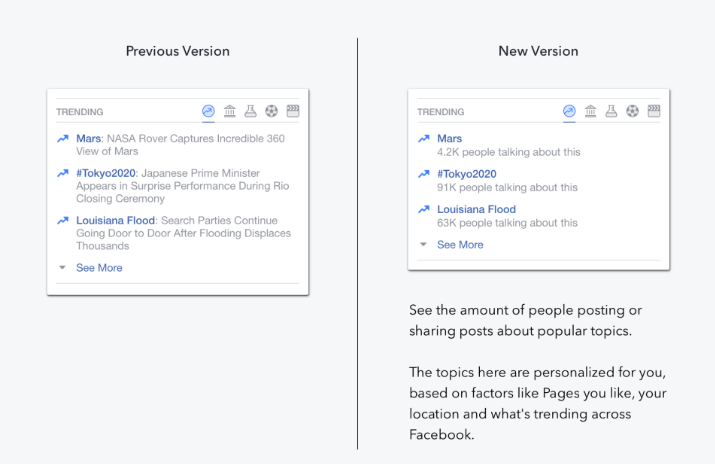

But this new approach dumbs down the way content is presented to an audience. Trending topics used to resemble traditional news headlines, with subheads summarizing each story. Now the subheads are gone, and that context has been reduced to keywords: Kanye West, Ann Coulter, Daft Punk Helmet. In their place, Facebook shows a counter of how many people are talking about each topic.

Without the subheads, the Trending section resembles the cover of a tabloid. Facebook is likely hoping readers will hover over and click on names they already know, making news discovery even more of a popularity contest than it already was. It’s a way for the company to capture more of its users’ attention in the simplest possible way. Yet less context is rarely a good thing when it comes to media.

The odd part is that the new Trending section doesn’t rank stories in any clear order. More than 1 million people may be talking about one topic, but right underneath, you’ll see a different topic only 1,000 people are talking about. In the announcement, Facebook said: “The list of topics you see is still personalized based on a number of factors, including Pages you’ve liked, your location (e.g., home state sports news), the previous trending topics with which you’ve interacted, and what is trending across Facebook overall.”

Essentially, Facebook’s algorithm prioritizes whatever it wants (which is okay under certain circumstances), but there’s little to no transparency about how it does so (not okay). On August 30, a Verizon ad promoting $100 off new phones allegedly ended up trending, with 110,000 people talking about it. Either Facebook took money from Verizon to promote the topic, or its algorithm isn’t filtering out ads. Whichever is true, that’s bad news.

http://twitter.com/AlanBleiweiss/status/770823237005484032

Facebook’s decision to remove the human editors has only served to make Trending unnecessarily problematic. I’d argue that Facebook would have actually benefitted by embracing the human element of news curation. There’s nothing wrong with admitting that judgment impacts what we read. Editors use that judgment when they arrange stories on the front page of a newspaper or homepage of a website.


Or, if Facebook actually wants to be purely a tech company, it should get rid of Trending entirely. That would be the surest way to eliminate any PR hassles. On mobile devices, Trending topics are buried in the search function, and since, per VentureBeat, a majority of Facebook’s active users access it only from mobile devices, phasing the feature out wouldn’t be a shock to the user experience.

But Trending is still alive. Perhaps Facebook doesn’t want to admit defeat, which would likely trigger another wave of negative publicity. Or maybe it wants to follow in Twitter’s footsteps and make news discovery a more significant part of its mobile experience.

If that’s the case, then Facebook would be wise to look at Twitter—not something you hear every day.

Twitter has many flaws, but it’s always had a more sophisticated relationship with the news. Its Trends section also operates via algorithm, yet it has largely avoided the kind of accusations of ideological suppression that Facebook faced. While both Facebook and Twitter outline how their respective algorithms function, Twitter does so in more detail, going so far as to explain the rules that prevent users from gaming the system.

Last year, Twitter also introduced Moments, which “features the best stories happening on Twitter, curated by Twitter and select partners.” Moments is a clear example of a social network showing people curated news without setting off an ethical firestorm. The pairing of Trends and Moments gives Twitter what Facebook seems to be after: a trusted content ecosystem partially controlled by technology and partially governed by people.

As Facebook wrestles with its identity like an angsty high schooler, it could learn a thing or two from my freshman journalism class: Bias is just a system of preferences. You can try to limit obvious subjectivity, but no matter what you do, you can’t escape it.
